US Foreign Policy

Research Methods Primer

Professor

Department of Political Science

011C Calvin Hall

meflynn@ksu.edu

2024-09-17

Overview

What do we want to get out of today’s class?

- Learn how to read a research paper

- Understand some of the general methods we use in research

- Learn the basics of how to interpret statistical models

- Recognize some of the different types of bias and ways that data can fool us

- Learn the importance of theory

Reading Research

The Anatomy of a Research Paper

How you’ll want to read it:

- Abstract

- Introduction

- Theory

- Research Design

- Results

- Conclusion

How you should read it (as a rough guide):

- Abstract

- Introduction

- Conclusion

- Results

- Research Design

- Theory

Methods

Common Methods

We use lots of different methods in the social sciences, but let’s look at a few key approaches/tools:

- Statistical modeling and regression analysis

- Formal (mathematical) modeling

- Experimental methods

- Case studies

- Field work

- Surveys

- Archival research

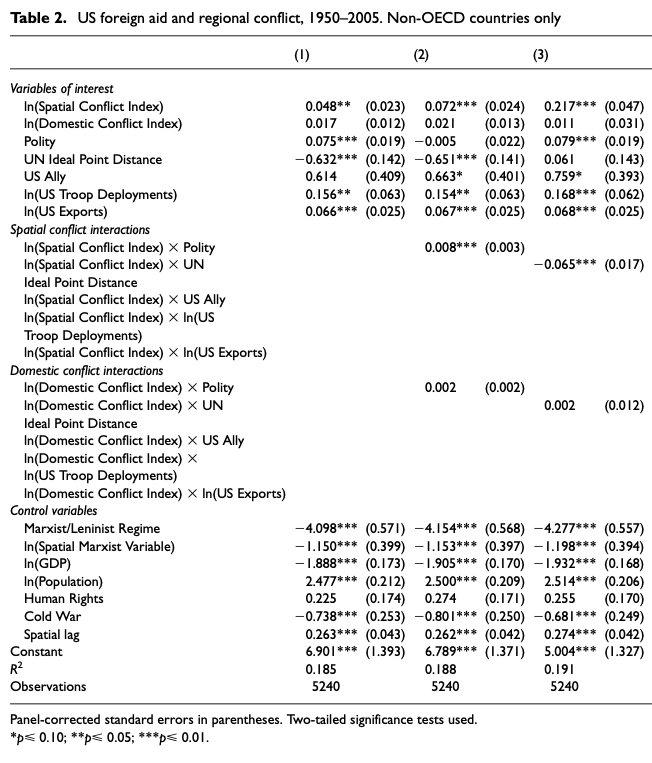

Interpretation

Interpreting and Assessing Statistical Models

First, what are our goals?

- Prediction?

- Description?

- Estimating causal effects?

Interpreting and Assessing Statistical Models

Prediction

- Overall model fit

- In-sample fit?

- Out-of-sample fit?

Description

- Overall model fit

- Individual coefficients

- Associations between groups

Causal Inference

- Focus on individual coefficients for causal effects

Interpreting and Assessing Statistical Models

What is a variable?

A variable is a characteristic, trait, attribute, or condition that can be measured or observed

A “definable quantity that can take on two or more values” (Kellstedt and Whitten 2018, 22).

Examples:

- Age

- Income

- Education

- Speed of a moving vehicle

- Color a car is painted

- Whether a person is a Democrat or Republican

- If a member of Congress served in the military or not

Interpreting and Assessing Statistical Models

What is a coefficient?

\[\begin{equation} Y_i = \alpha + {\color{blue} \beta_1} X_i + {\color{blue}\beta_2} Z_i + \epsilon_i \end{equation}\]

The estimated association between \(X\) and \(Y\), conditional on the other predictors and the model

Typically denotes the magnitude of the change in \(Y\) that corresponds to a one-unit change in \(X\), holding the other predictors constant

In some cases this interpretation is direct and easy; in others it is less so

Different models and measurement schemes mean we need to be cautious in interpreting coefficients
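To make the "one-unit change" reading concrete, here is a minimal Python sketch using only the standard library. The data are made up for illustration (years of education and income in $1,000s are assumptions, not data from the course), and the model has a single predictor rather than the two shown in the equation above.

```python
def mean(values):
    return sum(values) / len(values)

def slope(x, y):
    """OLS slope from regressing y on x (with an intercept)."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

# Hypothetical data: x is years of education, y is income in $1,000s.
x = [10, 12, 12, 14, 16, 16, 18, 20]
y = [31, 36, 38, 41, 47, 45, 52, 56]

beta = slope(x, y)                    # estimated coefficient on x
alpha = mean(y) - beta * mean(x)      # estimated intercept

def predict(value):
    return alpha + beta * value

# Moving x from 12 to 13 (one unit) changes the prediction by exactly beta.
one_unit_change = predict(13) - predict(12)
print(f"beta = {beta:.3f}, change in prediction = {one_unit_change:.3f}")
```

In a linear model like this one the coefficient and the one-unit change in the prediction are the same number. In models with transformed variables or non-linear link functions that direct reading no longer holds, which is why the caution above matters.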

Sources of Bias

What’s “Bias”?

Bias: Systematic error of some sort that can affect our inferences and conclusions (Howard 2017, 151)

What it’s not:

- Presenting “one side”

- “Bias” in the colloquial cable news meaning of “Anything I don’t like” or “Unflattering coverage of people and things I do like.”

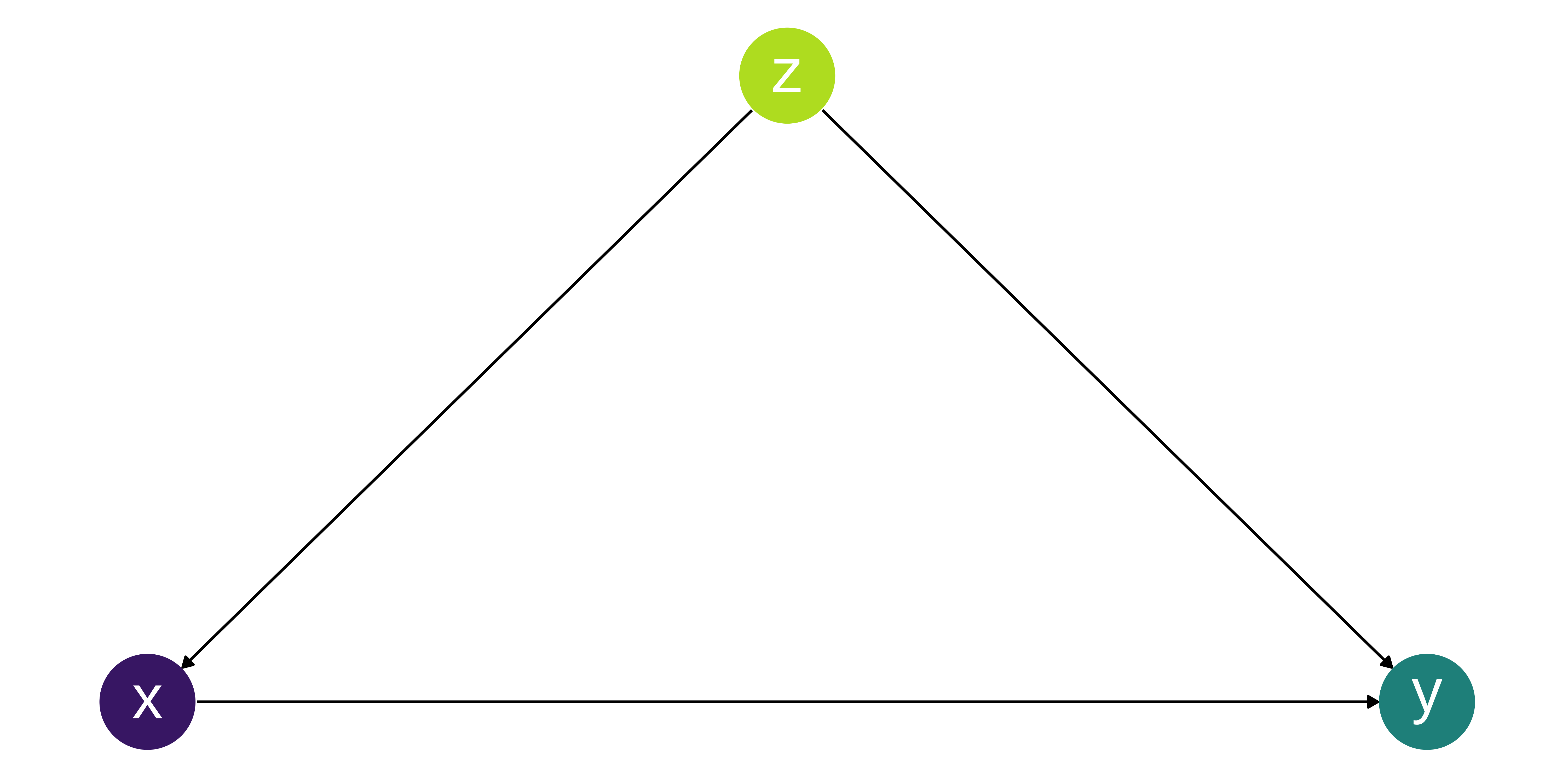

Confounding

What is confounding?

Confounding is when the predictor (i.e. X) and the outcome (i.e. Y) share a common ancestor (i.e. Z).

If we don’t take into account the relationship between Z and the other variables, our estimate of the effect of X on Y is biased.

The most common way to account for Z is to include it in our model

\[\begin{align} Y_i & = \alpha + \beta_1 X_i + \epsilon_i \\ & \text{vs.} \\ Y_i & = \alpha + \beta_1 X_i + \beta_2 Z_i + \epsilon_i \end{align}\]

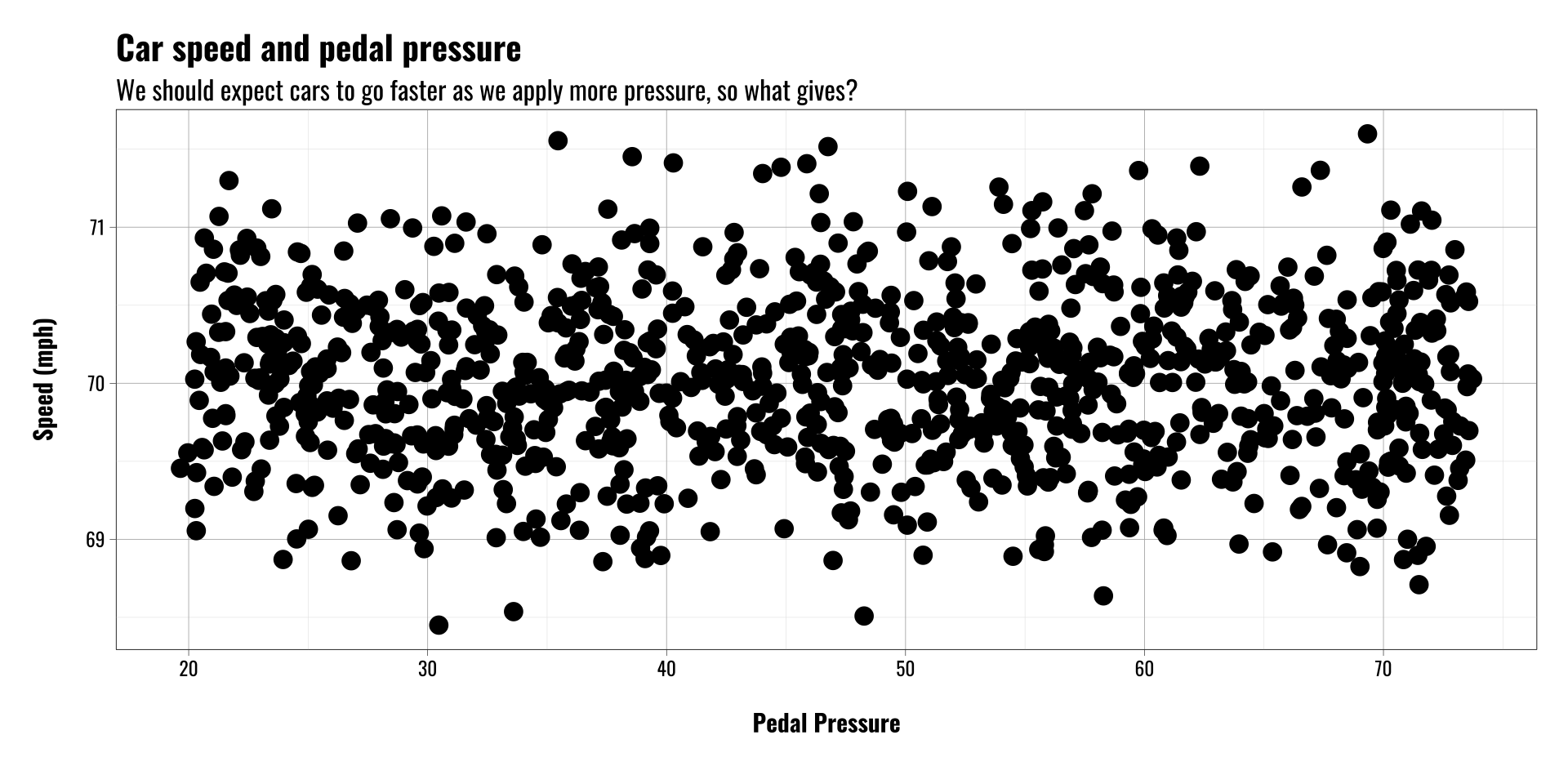

Confounding Example

Let’s imagine we’re interested in the relationship between gas pedal pressure and speed, so we collect data on

- A few hundred drivers

- Their speed in mph

- How hard they press the pedal

Call:

lm(formula = speed ~ pedal_pressure, data = cardata)

Coefficients:

(Intercept) pedal_pressure

70.0441843 -0.0004776

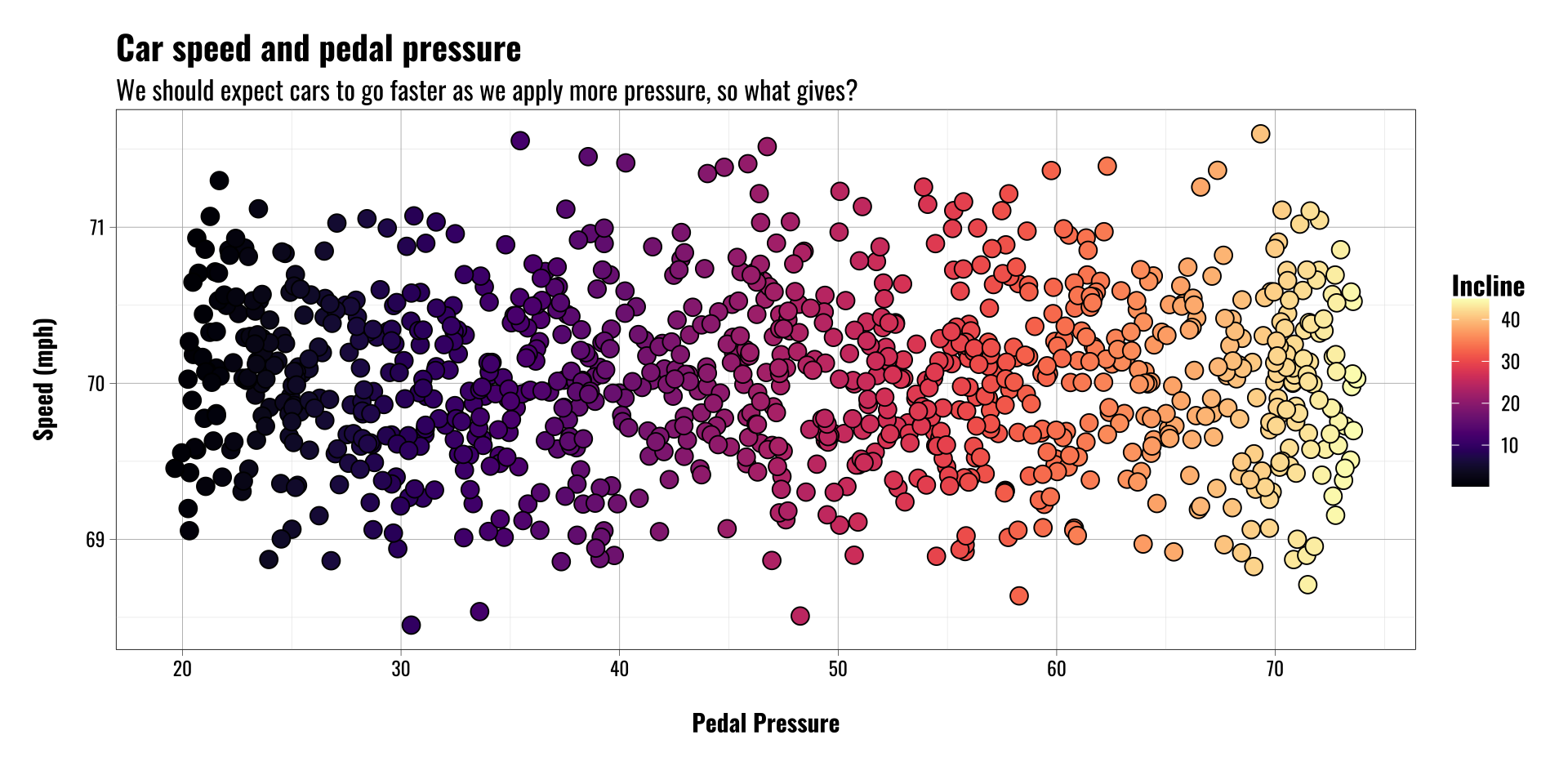

Confounding Example

Confounding can mask causal relationships

We need to understand the data generating process to help us make sense of results that may or may not themselves make sense

Adjusting for confounders in our model can reduce bias and help us better estimate causal relationships

Call:

lm(formula = speed ~ pedal_pressure + incline, data = cardata)

Coefficients:

(Intercept) pedal_pressure incline

45.110 1.244 -1.492

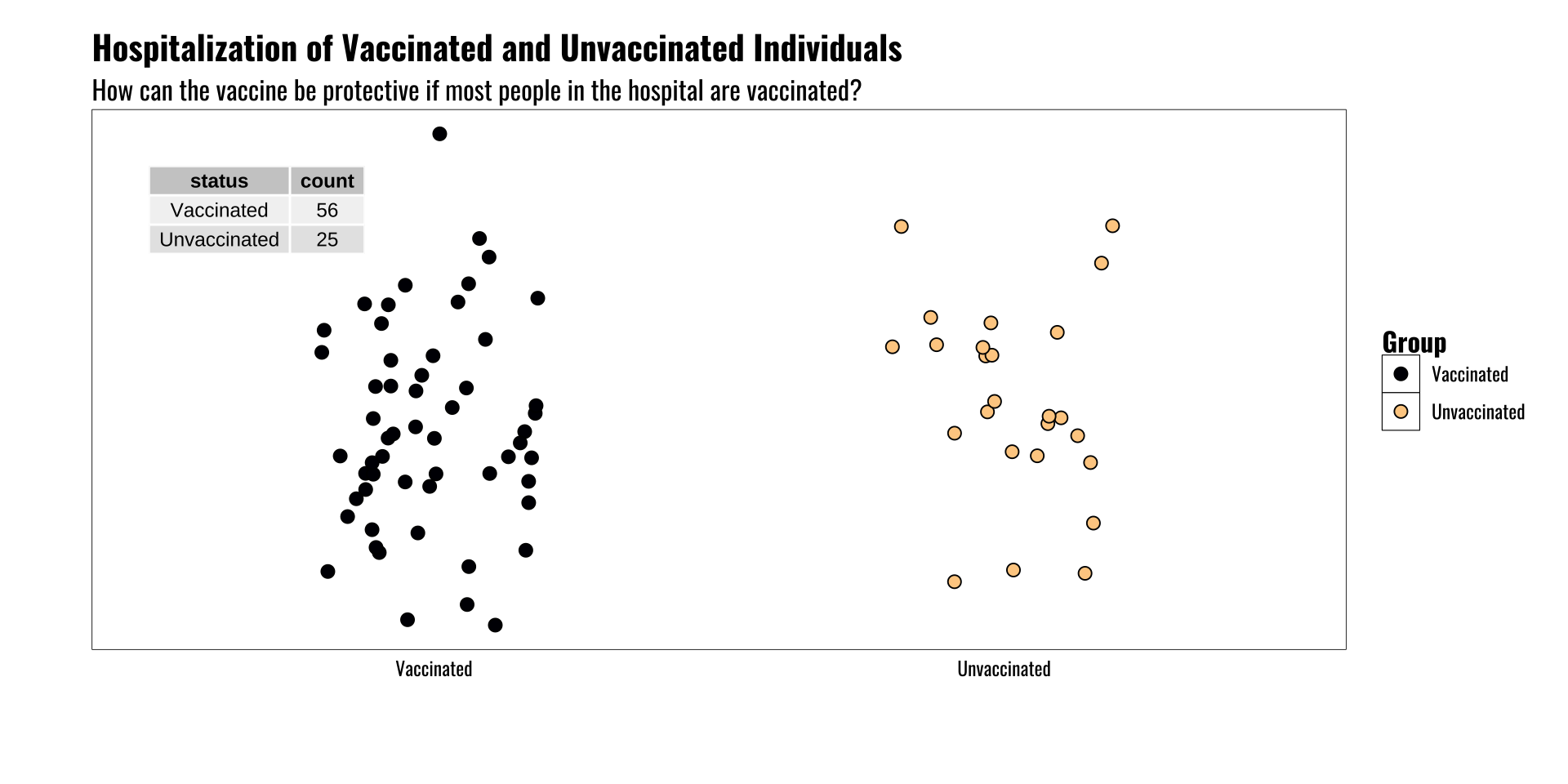

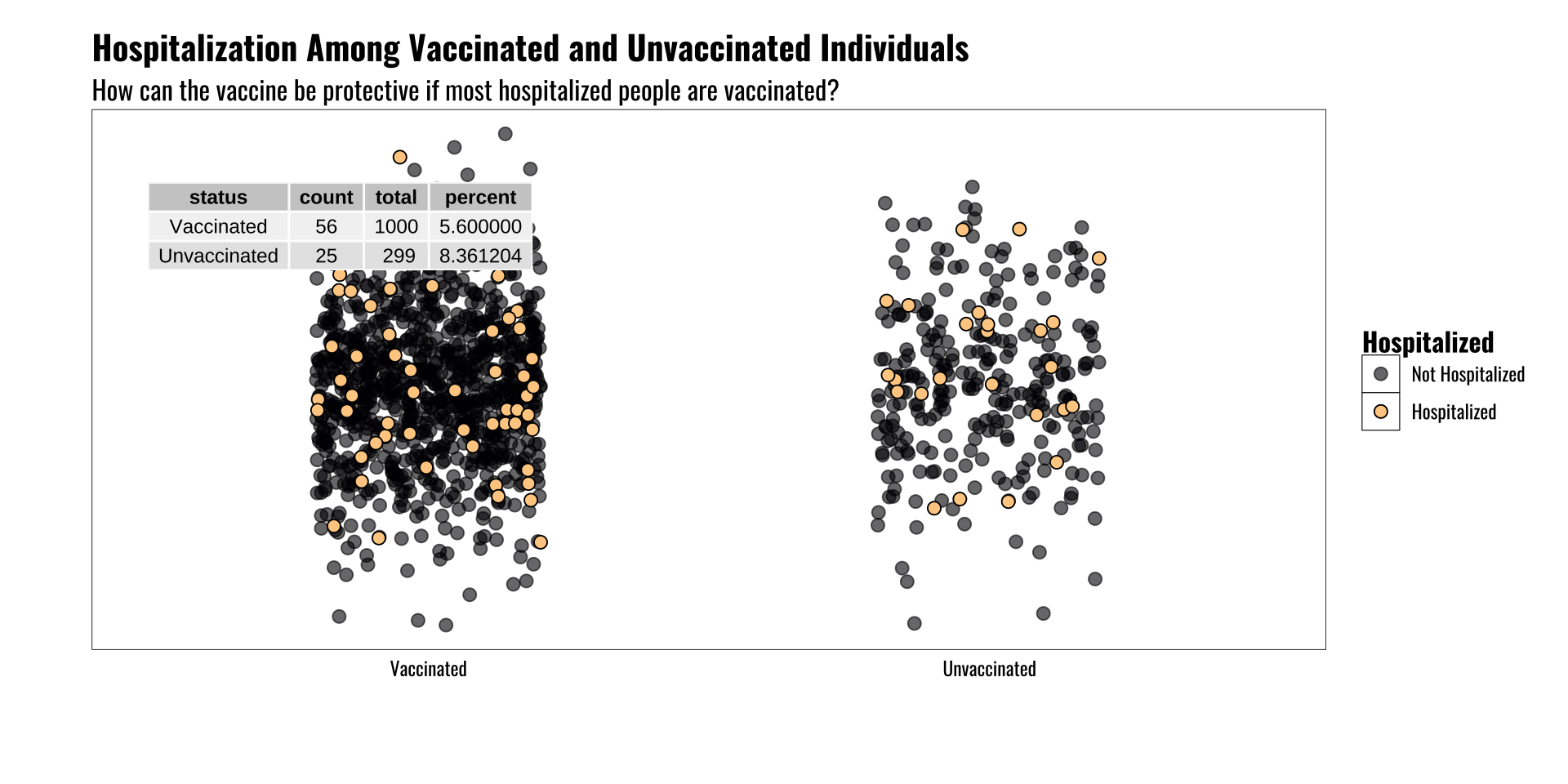
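The pattern in the two regressions above can be reproduced with a small simulation. The sketch below is written in Python with the standard library only, not the R code that produced the slides' output, and every number in the data generating process is an assumption chosen for illustration. Drivers press harder on steeper hills, so the unadjusted slope is close to zero even though pedal pressure truly raises speed. The adjusted slope is computed by partialling incline out of both variables, which gives the same coefficient as including incline in the regression (the Frisch-Waugh-Lovell result).

```python
def mean(values):
    return sum(values) / len(values)

def slope(x, y):
    """OLS slope from regressing y on x (with an intercept)."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

def residuals(x, y):
    """What is left of y after removing its linear relationship with x."""
    b = slope(x, y)
    a = mean(y) - b * mean(x)
    return [yi - (a + b * xi) for xi, yi in zip(x, y)]

# Hypothetical data generating process (all numbers are made up).
incline, pedal, speed = [], [], []
for hill in range(10):                    # incline from 0 to 9
    for extra in (-2, -1, 0, 1, 2):       # pressure variation unrelated to incline
        pressure = 20 + hill + extra      # steeper hill -> harder press
        incline.append(hill)
        pedal.append(pressure)
        # True effects: +1.25 per unit of pressure, -1.5 per unit of incline.
        speed.append(45 + 1.25 * pressure - 1.5 * hill)

naive = slope(pedal, speed)               # ignores incline
adjusted = slope(residuals(incline, pedal), residuals(incline, speed))

print(f"unadjusted slope: {naive:.3f}")
print(f"adjusted slope:   {adjusted:.3f}")
```

Because this toy example has no random noise, the adjusted slope recovers the assumed effect of 1.25, while the unadjusted slope sits near zero.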

Base Rate Fallacy

What is it and why does it matter?

- Counts can be deceptive without information about population of interest

- Example of vaccine status and hospitalization

- Vaccines are effective at reducing risk of severe illness, hospitalization, etc.

- And yet in some locations we see more hospital beds occupied by vaccinated people than non-vaccinated people

- Huh? What gives?

Base Rate Fallacy

The underlying population matters!

The rate of an event may vary across groups

Knowing something about the reference population is key

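Another way to think of this is the asymmetry of conditional probabilities: the probability of hospitalization given vaccination (\(H\) given \(V\)) is not the same as the probability of vaccination given hospitalization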

\[Pr(H \mid V) \neq Pr(V \mid H)\]
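A quick worked example shows how both statements about vaccines can be true at once. The figures below are hypothetical, chosen only to make the arithmetic easy: a population of 100,000 in which 90% are vaccinated, with a hospitalization risk of 10 per 1,000 for vaccinated people and 50 per 1,000 for unvaccinated people.

```python
# All numbers are hypothetical and chosen for easy arithmetic.
population = 100_000
vaccinated = 90_000
unvaccinated = population - vaccinated

risk_vax_per_1000 = 10      # assumed risk among the vaccinated
risk_unvax_per_1000 = 50    # assumed risk among the unvaccinated (5x higher)

hosp_vax = vaccinated * risk_vax_per_1000 // 1000
hosp_unvax = unvaccinated * risk_unvax_per_1000 // 1000

# Pr(H | V): chance a vaccinated person is hospitalized.
pr_h_given_v = hosp_vax / vaccinated
# Pr(V | H): share of hospitalized people who are vaccinated.
pr_v_given_h = hosp_vax / (hosp_vax + hosp_unvax)

print(f"hospitalized: {hosp_vax} vaccinated vs {hosp_unvax} unvaccinated")
print(f"Pr(H | V) = {pr_h_given_v:.3f}")
print(f"Pr(V | H) = {pr_v_given_h:.3f}")
```

Under these assumptions vaccinated patients outnumber unvaccinated patients in the hospital even though each vaccinated person faces one fifth of the risk, simply because the vaccinated group is nine times larger.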

Ecological Fallacy

What is it and why does it matter?

- The level at which our data are aggregated matters

- Drawing inferences across levels of aggregation can be tricky

- For example, income and voting behavior in US elections

- Wealthier states tend to break for Democratic candidates

- Wealthier individuals tend to break for Republican candidates

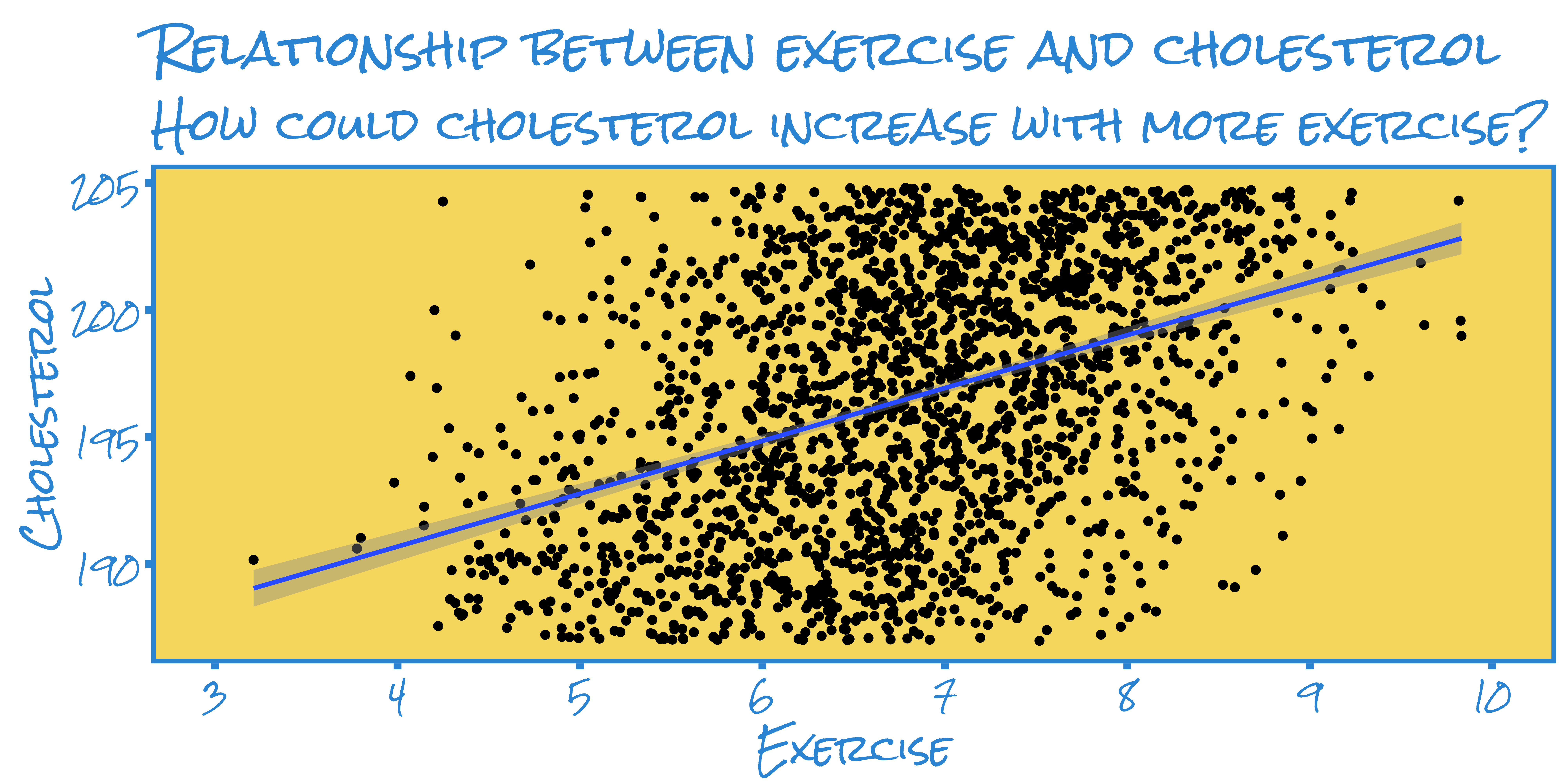
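The sketch below illustrates how a state-level pattern and an individual-level pattern can point in opposite directions. It is a toy example in Python with invented numbers, not real election data: three hypothetical states, five hypothetical voters in each, and an assumed probability of supporting the Republican candidate.

```python
def mean(values):
    return sum(values) / len(values)

def slope(x, y):
    """OLS slope from regressing y on x (with an intercept)."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

state_income, state_support = [], []   # one row per state
dev_income, dev_support = [], []       # individuals, relative to their state

for s in range(3):                     # state 0 is poorest, state 2 richest
    state_mean = 40 + 20 * s           # assumed mean income in $1,000s
    baseline = 0.60 - 0.15 * s         # assumed: richer states lean Democratic
    incomes = [state_mean + d for d in (-20, -10, 0, 10, 20)]
    # Assumed: within a state, richer individuals lean Republican.
    support = [baseline + 0.004 * (inc - state_mean) for inc in incomes]

    state_income.append(mean(incomes))
    state_support.append(mean(support))
    dev_income += [inc - state_mean for inc in incomes]
    dev_support += [p - mean(support) for p in support]

state_level = slope(state_income, state_support)
individual_level = slope(dev_income, dev_support)

print(f"state-level slope:      {state_level:.4f}")
print(f"individual-level slope: {individual_level:.4f}")
```

The state-level slope is negative and the within-state individual slope is positive, so inferring individual behavior from the state averages alone would get the sign wrong.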

Simpson’s Paradox

What is it and why does it matter?

Simpson’s Paradox is a special case of confounding

Simple bivariate relationship between X and Y may appear to show one thing

But when we adjust for other relevant variables (i.e. Z) the apparent relationship between X and Y reverses

Common in lots of fields of study

Simpson’s Paradox

Example:

Imagine we have data on the exercise activity and cholesterol of a few hundred adults

We plot the individuals’ cholesterol levels against their level of exercise (we’ll assume we directly observe this and it’s not self-reported)

In the plot we see that exercise appears to correlate positively with cholesterol levels. A simple regression model supports this eyeball estimate.

Call:

lm(formula = y ~ x, data = data2)

Coefficients:

(Intercept) x

182.372 2.078

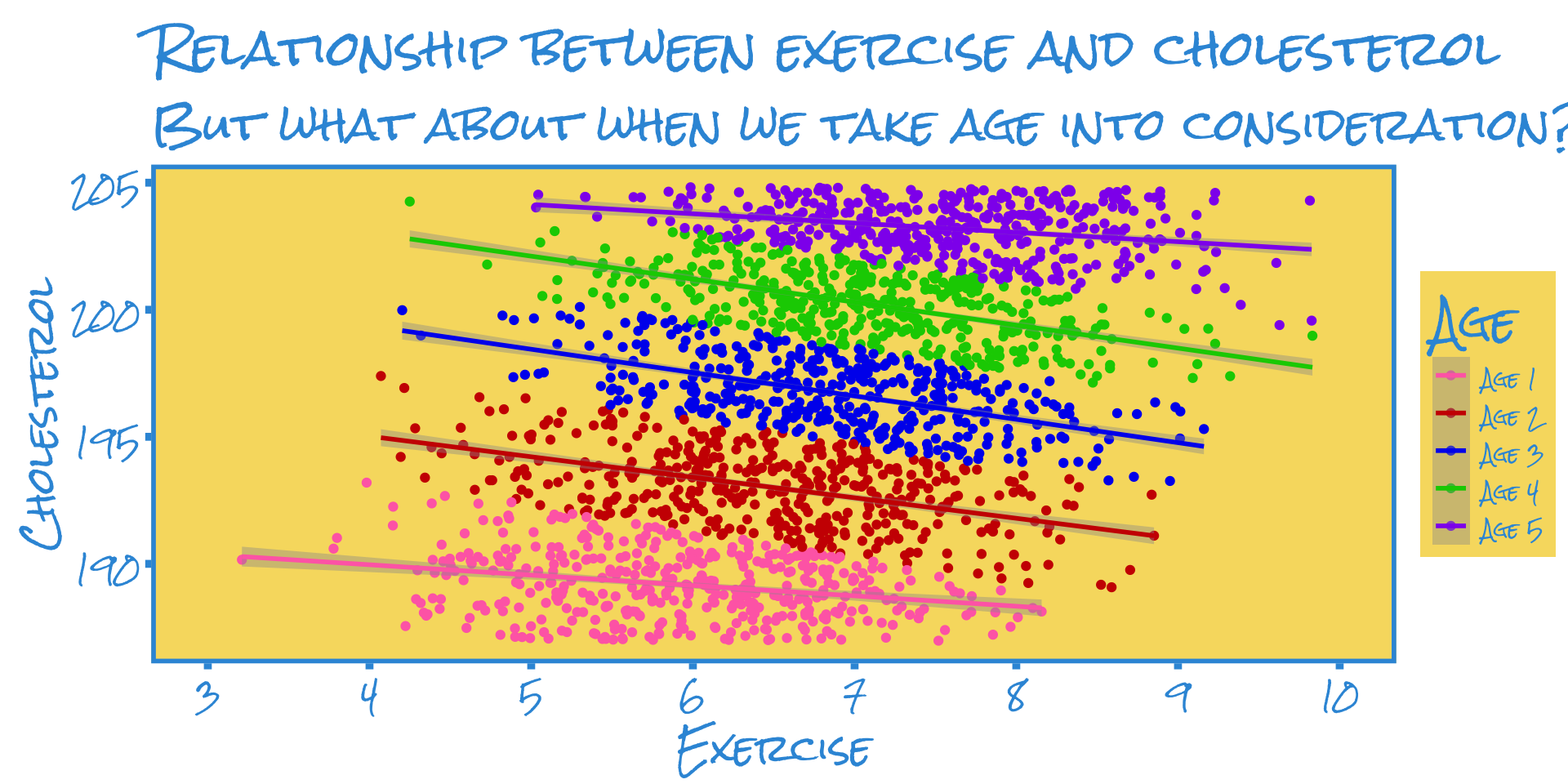

Simpson’s Paradox

Example:

But what if we’re omitting some key variables?

It’s possible for results to reverse if we’re omitting a variable that could also affect cholesterol levels

Another way to say this is that the effect of exercise might be biased because we’re leaving other important things out of the model.

One good variable would be patient age, so let’s see what that looks like

Call:

lm(formula = y ~ x + age, data = data2)

Coefficients:

(Intercept) x ageAge 2 ageAge 3 ageAge 4 ageAge 5

193.1930 -0.6774 4.2166 8.1891 11.8306 15.1400

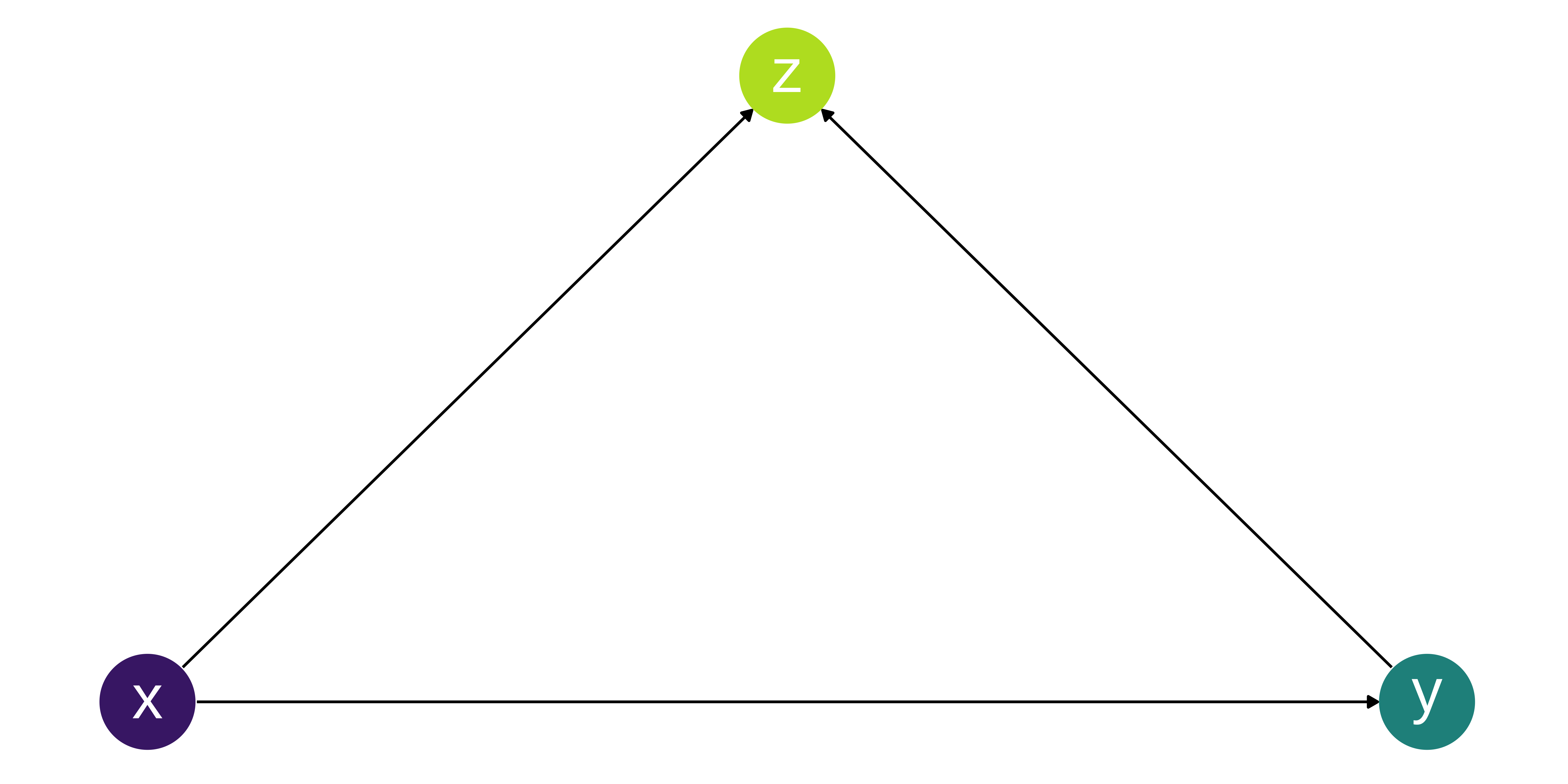
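The reversal can be reproduced with a small constructed dataset. This Python sketch does not use the data behind the plots above. It assumes five age groups in which older groups exercise more and have higher cholesterol, while within every group more exercise lowers cholesterol by 0.7 per unit. The within-group slope is computed by subtracting each age group's mean from x and y, which gives the same coefficient on x as a regression that includes age group indicators.

```python
def mean(values):
    return sum(values) / len(values)

def slope(x, y):
    """OLS slope from regressing y on x (with an intercept)."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

def demean_by_group(values, groups):
    """Subtract each group's mean from the values in that group."""
    group_means = {
        g: mean([v for v, gg in zip(values, groups) if gg == g])
        for g in set(groups)
    }
    return [v - group_means[g] for v, g in zip(values, groups)]

# Hypothetical data: x is exercise, y is cholesterol, age is a group label.
x, y, age = [], [], []
for group in range(5):
    for j in range(5):
        exercise = 2 * group + j                         # older groups exercise more
        x.append(exercise)
        age.append(group)
        y.append(190 + 10 * group - 0.7 * exercise)      # assumed true effect: -0.7

pooled = slope(x, y)
within = slope(demean_by_group(x, age), demean_by_group(y, age))

print(f"pooled slope (ignoring age):   {pooled:.3f}")
print(f"within-age slope (adjusted):   {within:.3f}")
```

The pooled slope is positive because age drives both exercise and cholesterol upward. Once age is held fixed, the slope returns to the assumed negative effect.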

Collider Bias

What is collider bias?

Collider bias can be introduced by adjusting for a common effect.

It can also be a form of selection bias.

Like Simpson’s Paradox, it can make effects appear to be the opposite of what they are, but whether it arises also depends on the population of interest.

In this example Z is a collider because both X and Y cause Z.

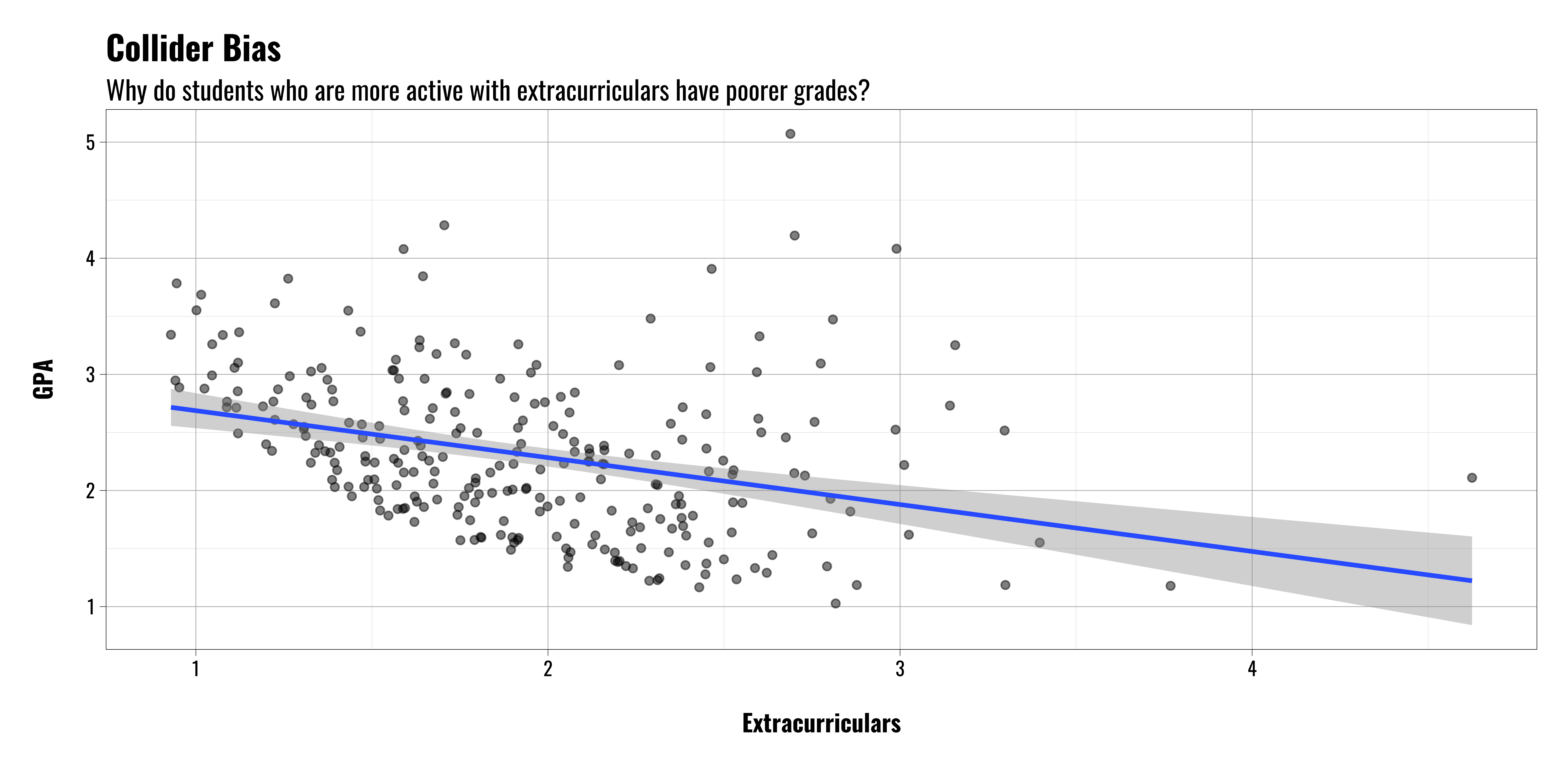

Collider Bias

Example: College Admissions

Let’s assume we’re interested in looking at students who have been admitted to college to see what the relationship is between their high school extracurricular activities and GPA

We collect data on college freshmen and compare their extracurricular activities in high school with their GPA.

Surprisingly, we find a negative relationship.

That’s sad.

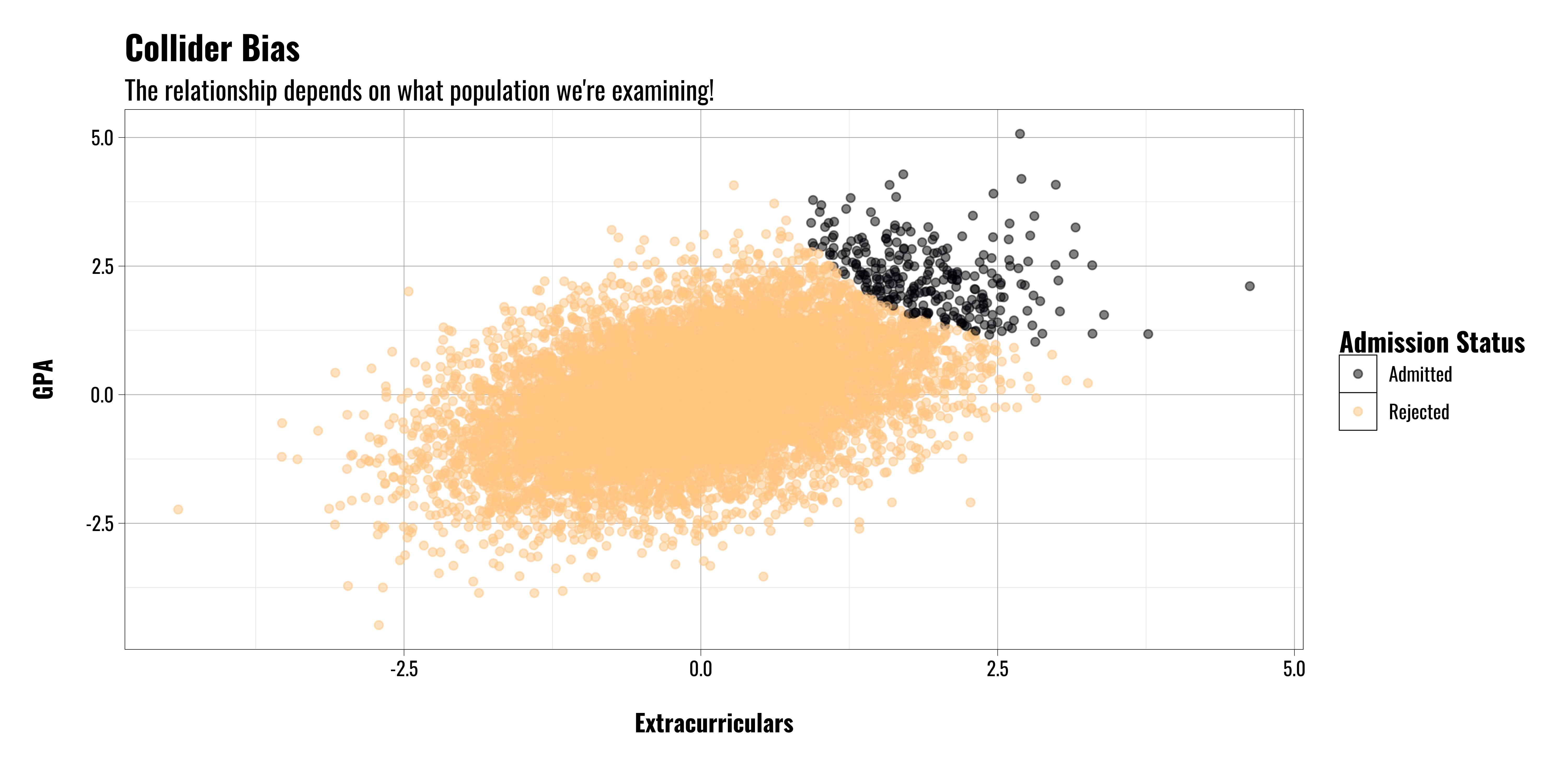

Collider Bias

Example: College Admissions

But wait!

If we’re looking at students who have already been admitted to college we’re implicitly conditioning on a collider variable (i.e. acceptance/admission status)

If we step back and look at the entire population of high school students we find something different

Students who have better grades and/or better extracurricular activities are more likely to be admitted to college in the first place.
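A minimal Python sketch of this selection effect follows. The scoring scale and the admission rule are assumptions made for illustration: every combination of a 0 to 10 grade score and a 0 to 10 extracurricular score appears exactly once, so the two are unrelated in the full population, and a student is admitted when the two scores sum to at least 12.

```python
def mean(values):
    return sum(values) / len(values)

def correlation(x, y):
    """Pearson correlation between x and y."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

# Hypothetical population: grades and activities are unrelated by construction.
students = [(grades, activities)
            for grades in range(11)
            for activities in range(11)]

# Assumed admission rule: admission depends on both scores (the collider).
admitted = [(g, a) for g, a in students if g + a >= 12]

corr_all = correlation([g for g, _ in students], [a for _, a in students])
corr_admitted = correlation([g for g, _ in admitted], [a for _, a in admitted])

print(f"all students:      r = {corr_all:.2f}")
print(f"admitted students: r = {corr_admitted:.2f}")
```

Among admitted students the correlation is negative even though none exists in the full population: a student admitted with a low grade score must have a high activities score, and the reverse.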

Role of Theory

The Role of Theory

What is theory?

Let’s start with what it’s not

- Not a hypothesis

- Not a hunch

- Not a guess

- Not just an idea with little or no supporting evidence

The Role of Theory

What is theory?

More accurately, theory is the story we tell about which variables are important and how those variables are related.

It both describes and explains how variables affect one another.

It also allows us to make predictions about what we should expect to see in various scenarios

Theory: A tentative conjecture about the causes of some phenomenon of interest (Kellstedt and Whitten 2019, 22)

Theory: A logically consistent set of statements that explains a phenomenon of interest (Frieden, Lake, and Schultz 2022, xxix)